Not so long ago, interacting with artificial intelligence felt like talking to a very diligent but inattentive assistant. Models handled short explanations or the translation of individual sentences fairly well, but they “fell apart” on longer tasks. As soon as you added a few extra conditions to a request or stretched out the dialogue, the logic broke down and details were forgotten. Users spent more time editing the result than formulating the task itself.

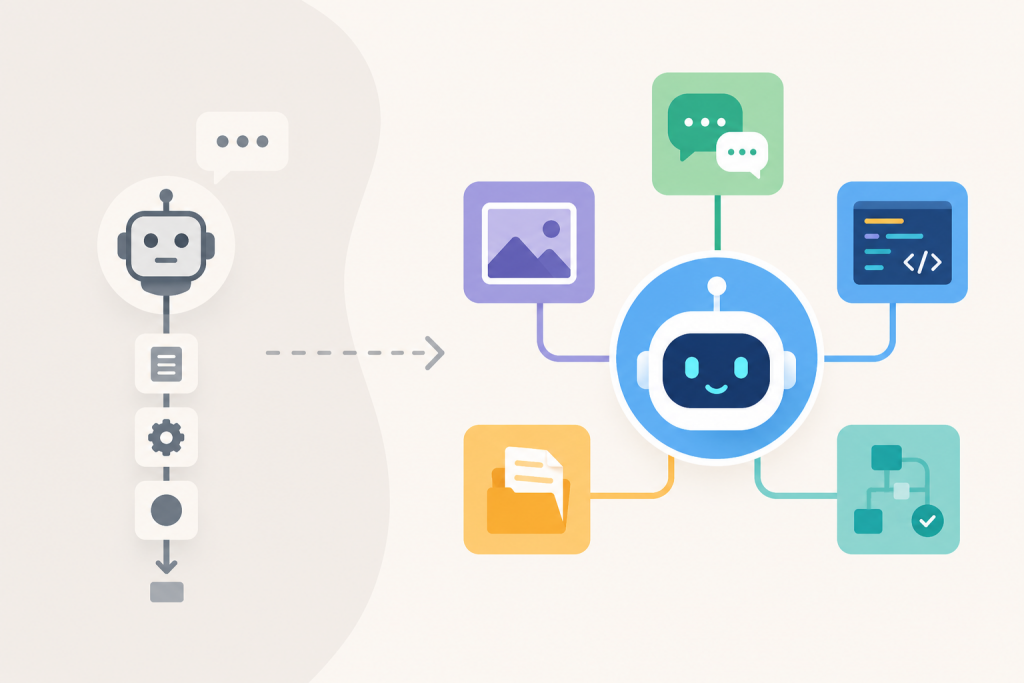

Modern models are gradually changing the very perception of AI, from a simple “chatbot” to a full-fledged digital agent. The recent release of GPT-5.5 by OpenAI is a telling example. It is not just another increase in power but an attempt to build a system that grasps the user’s intentions almost intuitively. In the presentation, the developers emphasized that the model has become much more independent. It can now be entrusted with a multi-part task with many “variables”: for example, researching a topic online, analyzing the data, and formatting the results into a table or document in one go. The model has learned to switch between tools, plan the stages of its work and, most importantly, check its own results for errors before showing them to the user.

Working with intent, not just text

Previously, success depended on how thoroughly you described each step. You literally had to “lead the model by the hand,” specifying the style, structure, and limitations. The slightest imprecision in the wording, and the AI would produce generic text that had nothing to do with real needs.

New models have learned to read context better. Given a request as simple as “write an article,” the system can take the brand’s specifics into account and, on its own, adjust the complexity of the terminology to the intended audience. Human control remains, but it shifts to evaluating the result rather than micromanaging every sentence. AI has begun to recognize the internal connections within a task and propose a way to solve it. This is especially noticeable in GPT-5.5, which reasons better under ambiguity and tries to find the most sensible course of action when the input data is incomplete.

Context reliability and “long memory”

Context is the foundation of any serious work, whether it is a technical specification or the history of a correspondence. Older models had a short memory: their context window was small. By the end of a long dialogue, they could completely ignore conditions you had stated at the very beginning, simply because newer tokens had pushed those conditions out of the window.
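To make the “pushed out by new tokens” effect concrete, here is a deliberately simplified sketch. It assumes that one whitespace-separated word counts as one token and that the oldest messages are simply dropped once a fixed budget is exceeded; the function name `visible_context` and the sample dialogue are hypothetical, and real systems use subword tokenizers and may pin, summarize, or otherwise preserve early instructions instead of discarding them.

```python
from collections import deque


def visible_context(messages, window_tokens):
    """Return the most recent messages that fit in a fixed token budget.

    Simplifying assumption: one whitespace-separated word == one token,
    and older messages are discarded first when the budget runs out.
    """
    kept = deque()
    used = 0
    for msg in reversed(messages):  # walk from newest to oldest
        cost = len(msg.split())
        if used + cost > window_tokens:
            break  # this message and everything older fall out of view
        kept.appendleft(msg)  # preserve chronological order
        used += cost
    return list(kept)


dialogue = [
    "Always answer in formal English and cite sources",  # early condition
    "Summarize chapter one",
    "Now summarize chapter two in more detail please",
    "Compare both chapters",
]

# With a 16-token budget, the instruction from the very first message
# no longer fits, so the "model" never sees it.
print(visible_context(dialogue, window_tokens=16))
```

The same dialogue with a larger budget keeps every message, which is, in miniature, why longer context windows make early conditions less likely to be forgotten.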

Working with large volumes of information has now become more stable. This is critical for checking documentation for contradictions or analyzing sprawling codebases. The model can keep the entire input in view at once, which makes it possible to find small flaws in the logic of a technical specification or to draw conclusions from a dozen different sources without the risk that, halfway through, the AI will “forget” what was discussed on the first page.

Programming and technical challenges

Software development has perhaps seen the most noticeable leap. Where earlier AI could help write an isolated function, it is now better at navigating the architecture of an entire project, that is, at understanding how a change in one file will affect other parts of the system.

Developers are increasingly using AI for refactoring, tracking down the causes of failures, and writing tests. An important part of this is the rise of the agentic approach. Rather than simply producing a piece of code, the model tries to plan its actions, check the result, and correct its own mistakes along the way. This does not replace the programmer, but it removes a huge layer of routine from their work. At the same time, new models remain fast enough to be integrated into the daily workflow without unnecessary delays.
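The “plan, check, correct” cycle can be illustrated with a minimal loop. This is a hypothetical sketch, not how GPT-5.5 or any particular product works internally: `propose` stands in for a model call that receives feedback from earlier attempts, `check` stands in for running tests, and `toy_model` and `run_tests` are made-up stand-ins for demonstration.

```python
from typing import Callable, List, Optional, TypeVar

T = TypeVar("T")


def solve_with_self_check(
    propose: Callable[[List[str]], T],
    check: Callable[[T], List[str]],
    max_attempts: int = 3,
) -> Optional[T]:
    """Hypothetical agent loop: draft, verify, and retry with feedback."""
    feedback: List[str] = []
    for _ in range(max_attempts):
        candidate = propose(feedback)  # draft a solution given past errors
        errors = check(candidate)      # e.g. run the project's tests
        if not errors:
            return candidate           # only verified output is returned
        feedback = errors              # failures inform the next attempt
    return None                        # give up and hand back to a human


def toy_model(feedback: List[str]) -> Callable[[List[int]], int]:
    # Stand-in for a model: the first draft has an off-by-one bug,
    # and the revision produced after seeing feedback fixes it.
    if not feedback:
        return lambda xs: sum(xs[1:])
    return lambda xs: sum(xs)


def run_tests(total: Callable[[List[int]], int]) -> List[str]:
    # Stand-in for a test suite: returns a list of failure messages.
    errors = []
    for xs, expected in [([1, 2, 3], 6), ([], 0)]:
        got = total(xs)
        if got != expected:
            errors.append(f"total({xs}) returned {got}, expected {expected}")
    return errors


fixed = solve_with_self_check(toy_model, run_tests)
print(fixed([1, 2, 3]) if fixed else "no verified solution")
```

The key design choice is that nothing leaves the loop unchecked: either a candidate passes verification or the loop stops after a bounded number of attempts and returns control to the developer.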

Deep analysis of data and tables

Business processes often get bogged down in unstructured information: reports, tables, reviews. Previously, AI produced only superficial summaries that were hard to put to practical use. Today’s tools can compare data across formats, extract what matters, and bring order to the chaos.

In practice, this means quickly processing hundreds of customer requests to detect systemic problems, or automatically turning raw numbers into a coherent report. Teams that work with analytics get a tool that does more than sort data: it helps them see the trends behind it.

Focus on the result

Modern AI is no longer just a text window. It integrates with other tools, so a task can be not only discussed but also carried out: creating ready-made files, performing calculations, comparing document versions. This is the path from “giving advice” to “performing part of the process.”

Still, the limits are worth remembering. Despite the progress, AI remains a probabilistic model. It can make mistakes with numbers, misinterpret specific legal nuances, or invent facts where it lacks knowledge. In critical fields such as medicine or security, the final word must always rest with a specialist. AI is well suited to creating drafts, structuring thoughts, and speeding up work, but responsibility for risks and strategic vision remains with the human. The main value of the new tools lies not in flawlessness but in their ability to act as a genuinely useful partner in complex work cycles.